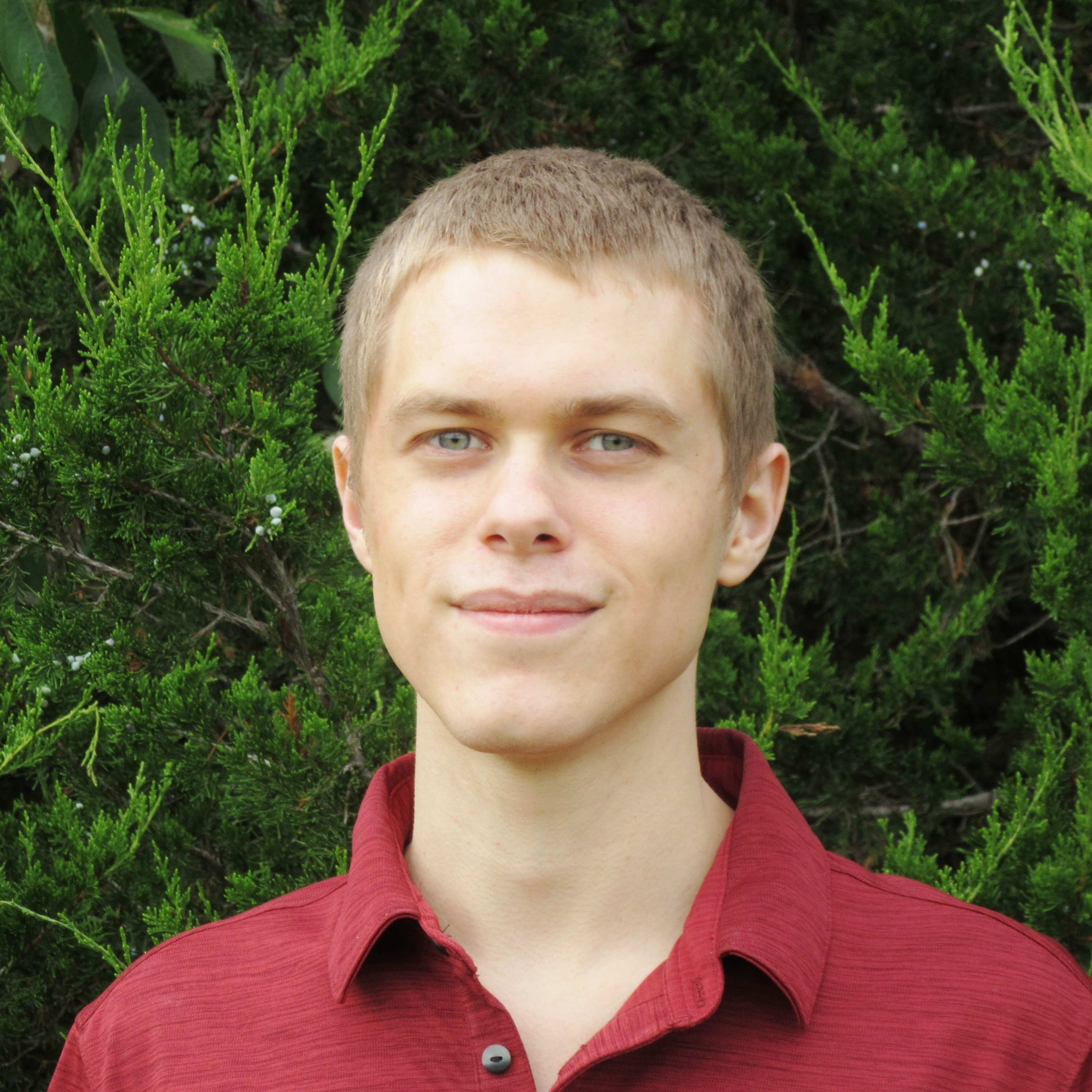

Joe Carlsmith on How We Change Our Minds About AI Risk

Joe Carlsmith joins the podcast to discuss how we change our minds about AI risk, gut feelings versus abstract models, and what to do if transformative AI is coming soon.

View episode

Examines threats that could permanently curtail humanity's potential or cause human extinction. Includes nuclear warfare, engineered pandemics, climate catastrophe, unaligned AI, and other global catastrophic risks that threaten civilization's long-term survival.

Dan Hendrycks joins the podcast to discuss evolutionary dynamics in AI development and how we could develop AI safely.

View episode

Roman Yampolskiy joins the podcast to discuss various objections to AI safety, impossibility results for AI, and how much risk civilization should accept from emerging technologies.

View episode

Nathan Labenz joins the podcast to discuss the economic effects of AI on growth, productivity, and employment.

View episode

Nathan Labenz joins the podcast to discuss the cognitive revolution, his experience red teaming GPT-4, and the potential near-term dangers of AI.

View episode

Connor Leahy joins the podcast to discuss the state of AI.

View episode

Connor Leahy joins the podcast to discuss GPT-4, magic, cognitive emulation, demand for human-like AI, and aligning superintelligence.

View episode

Lennart Heim joins the podcast to discuss how we can forecast AI progress by researching AI hardware.

View episode

Liv Boeree joins the podcast to discuss poker, GPT-4, human-AI interaction, whether this is the most important century, and building a dataset of human wisdom.

View episode

Tobias Baumann joins the podcast to discuss suffering risks, space colonization, and cooperative artificial intelligence.

View episode

Tobias Baumann joins the podcast to discuss suffering risks, artificial sentience, and the problem of knowing which actions reduce suffering in the long-term future.

View episode

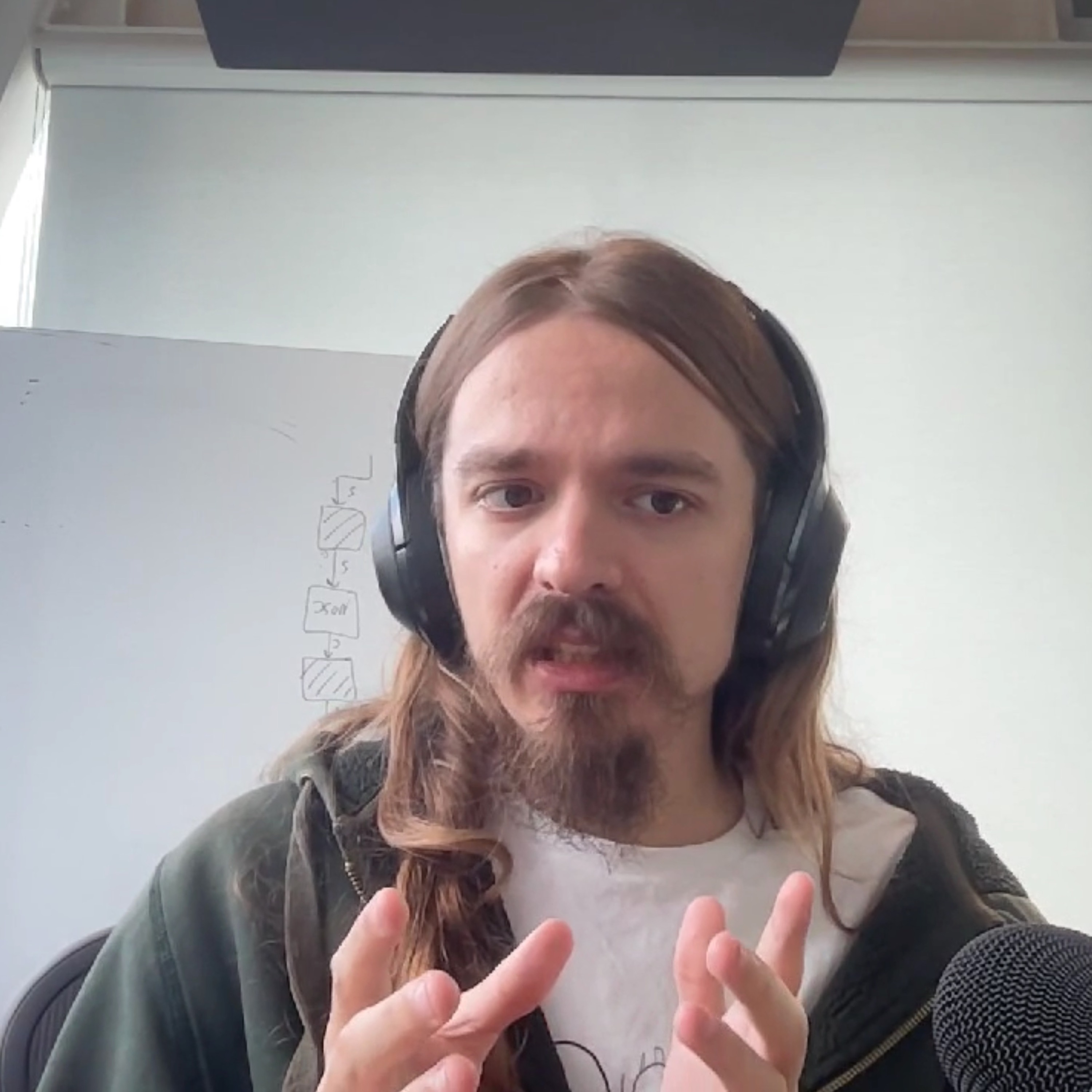

Neel Nanda joins the podcast to talk about mechanistic interpretability and how it can make AI safer.

View episode